We then consider improving the algorithm efficiency for tetrahedralizing large-scale geometries.

It relies on three core principles: hybrid geometric kernel, tolerance of the mesh relative to the surface input, and iterative mesh optimization with guarantees on the output validity.

We propose an algorithm, TetWild, that is unconditionally robust, requires no user interaction, and can directly convert a triangle soup into an analysis-ready volumetric tetrahedral mesh. This thesis first investigates the problem of tetrahedralizing 3D geometries represented by piecewise linear surfaces. Different from existing methods that have assumptions about the input and thus often fail on real-world input geometries, WildMeshing provides strict guarantees of termination and is a black box that can be easily integrated into any geometry processing pipelines in research or industry.

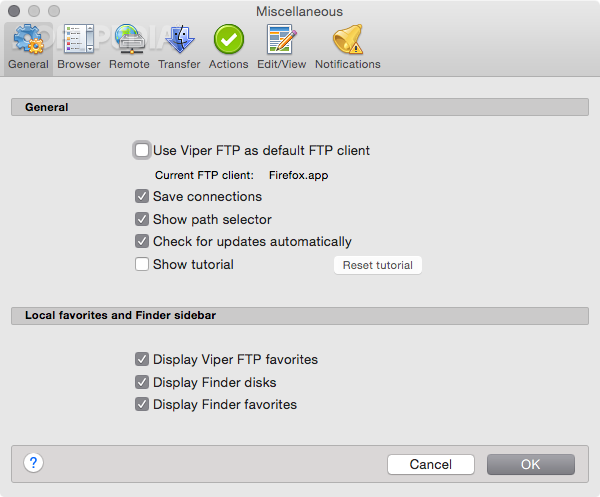

#VIPER FTP SEGMENTED SERIES#

In this thesis, we propose a series of meshing algorithms, named WildMeshing, that tackles one of the long-standing, yet fundamental, problems in geometry modeling: robustly and automatically generating high-quality triangle and tetrahedral meshes and repairing imperfect geometries in the wild. Meshes can be used in many fields, including physical simulation in manufacturing, architecture design, medical scan analysis. Title: Unstructured Mesh Generation and Repairing in the WildĪ mesh is a representation used to digitally represent the boundary or volume of an object for manipulation and analysis. These causal-aware and robust prediction models I have developed in collaboration with the World Bank and Google have shown that incorporating domain-specific structure is essential for building robust predictive models.Ģ022 Unstructured Mesh Generation and Repairing in the Wild I will demonstrate how, through these new approaches to incorporating domain knowledge, I have been able to meaningfully improve performance in four real-world applications of news-based famine forecasting, medication recommendations, causal question answering, and toxicity detection in online social media. Through methodological contributions in causal-aware ML model design, constrained optimization, counterfactual data augmentation, and feature selection, I have addressed core research questions of “What data distributions do domain practitioners care about?'', “How to faithfully convert domain knowledge into model constraints for better generalization?'' and finally ``How to evaluate whether the ML models we learn are grounded in the domain knowledge and in what ways do they deviate?''. In this talk, I will focus on the design of Domain Faithful Deep Learning Systems, that translate expert-understandable domain knowledge and constraints to be faithfully incorporated into learning robust deep learning models.

In high-stakes domains like health, socio-economic inference, and content moderation, a fundamental roadblock for relying on deep learning systems is that models' predictions diverge from established domain knowledge when deployed in the real world and fail to faithfully incorporate domain-specific structure. Title: Enhancing Robustness through Domain Faithful Deep Learning Systems
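TetWild's principle of "iterative mesh optimization with guarantees on output validity" presupposes an exact answer to one question: is a given tetrahedron flat or inverted? The sketch below is a minimal illustration under our own assumptions, not TetWild's code. It stores coordinates as Python `Fraction`s, standing in for the exact-arithmetic side of a hybrid kernel, and treats a mesh as valid when every tetrahedron has strictly positive signed volume. The helper names `signed_volume6` and `is_valid_tet_mesh` are hypothetical.

```python
from fractions import Fraction
from typing import Sequence, Tuple

# Assumption: vertex coordinates are exact rationals, so the sign test below is never wrong.
Point = Tuple[Fraction, Fraction, Fraction]


def signed_volume6(a: Point, b: Point, c: Point, d: Point) -> Fraction:
    """Return 6x the signed volume of tetrahedron (a, b, c, d), computed exactly."""
    # Edge vectors from vertex a.
    u = [b[i] - a[i] for i in range(3)]
    v = [c[i] - a[i] for i in range(3)]
    w = [d[i] - a[i] for i in range(3)]
    # Scalar triple product u . (v x w), i.e. the 3x3 determinant of [u; v; w].
    return (u[0] * (v[1] * w[2] - v[2] * w[1])
            - u[1] * (v[0] * w[2] - v[2] * w[0])
            + u[2] * (v[0] * w[1] - v[1] * w[0]))


def is_valid_tet_mesh(vertices: Sequence[Point],
                      tets: Sequence[Tuple[int, int, int, int]]) -> bool:
    """A mesh passes only if no tetrahedron is flat (zero volume) or inverted (negative)."""
    return all(signed_volume6(*(vertices[i] for i in t)) > 0 for t in tets)


# Usage: the unit corner tetrahedron, once in positive and once in swapped orientation.
F = Fraction
verts = [(F(0), F(0), F(0)), (F(1), F(0), F(0)), (F(0), F(1), F(0)), (F(0), F(0), F(1))]
print(is_valid_tet_mesh(verts, [(0, 1, 2, 3)]))  # True
print(is_valid_tet_mesh(verts, [(0, 1, 3, 2)]))  # False (inverted)
```

With floating-point coordinates, the same determinant can return the wrong sign for nearly flat tetrahedra, which is the usual motivation for evaluating such predicates exactly.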